The moment I saw Microsoft drop Foundry Local, my first thought was: finally, someone is taking local inference seriously for JavaScript developers. Not Python first, not C# first. Well, okay, those too. But the JS/TS SDK actually works, and that is saying something.

What Foundry Local actually is

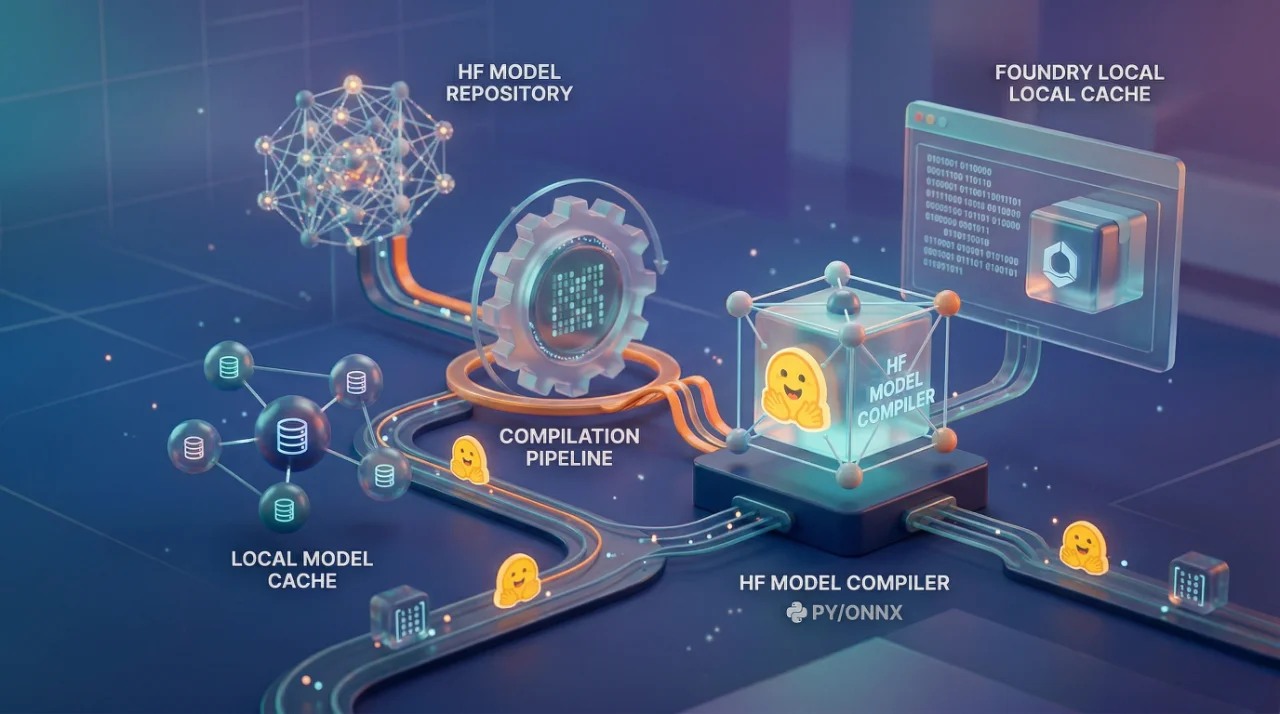

Foundry Local is basically a local REST server that runs AI models on your machine. No cloud calls. No API keys that cost you money every time someone hits enter. It spins up an OpenAI-compatible endpoint on localhost, and you talk to it using the same OpenAI SDK you already know. That last part is the important bit: you don't have to learn a new SDK pattern. If you've written openai.chat.completions.create() before, you already know 80% of what you need.

The setup for JavaScript is straightforward. You need Node.js 20+. Create a project and install two packages: foundry-local-sdk and openai. On Windows there is a --winml flag during install that gives you better hardware acceleration. On Mac and Linux you skip that flag. That's it.
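If you want a project to fail fast when those prerequisites aren't met, a small preflight check is enough. This is a sketch. `checkEnvironment` is a hypothetical helper, not part of foundry-local-sdk. It only reports two things: whether the Node.js major version is 20 or later, and whether the platform is Windows, where the --winml flag applies. It doesn't run the install for you.

```javascript
// Hypothetical preflight helper; not part of foundry-local-sdk.
function checkEnvironment(nodeVersion = process.versions.node, platform = process.platform) {
  // process.versions.node looks like "20.11.1"; compare the major version only.
  const major = Number.parseInt(nodeVersion.split('.')[0], 10);
  return {
    nodeOk: major >= 20,
    // The --winml install flag applies on Windows only; macOS and Linux skip it.
    useWinml: platform === 'win32',
  };
}

const env = checkEnvironment();
if (!env.nodeOk) {
  console.error(`Node.js 20+ required, found ${process.versions.node}`);
}
if (env.useWinml) {
  console.log('Windows detected: install with the --winml flag for better acceleration.');
}
```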

The code pattern goes like this:

1. Initialize a FoundryLocalManager with a config.
2. Pick a model by alias, like qwen2.5-0.5b.
3. Download it, then load it.
4. Start the web service.
5. Use the OpenAI SDK pointed at http://localhost:5764/v1.

The model downloads on first run, which can take a few minutes, but after that it is cached. I appreciate this because I have been in situations where a demo broke because the model wasn't cached and everyone was staring at a progress bar. At least the SDK gives you a download progress callback, so you can show the user something.
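For that progress callback, a simple text progress bar is enough to keep a demo audience from staring at a frozen terminal. This sketch assumes the callback hands you a percentage from 0 to 100. I haven't confirmed the exact callback signature, so treat the wiring in the final comment as hypothetical and check the SDK's type definitions. `renderProgressBar` is a hypothetical helper.

```javascript
// Hypothetical helper: renders a text progress bar from a percentage.
// Assumption: the SDK's download progress callback reports 0-100.
function renderProgressBar(percent, width = 20) {
  // Clamp so out-of-range values never produce a negative repeat count.
  const clamped = Math.max(0, Math.min(100, percent));
  const filled = Math.round((clamped / 100) * width);
  return `[${'#'.repeat(filled)}${'-'.repeat(width - filled)}] ${clamped.toFixed(0)}%`;
}

// Hypothetical wiring; the real callback signature may differ:
// await model.download((p) => process.stdout.write(`\r${renderProgressBar(p)}`));
```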

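```javascript
// ES module: top-level await needs "type": "module" in package.json or a .mjs file.
import { FoundryLocalManager } from 'foundry-local-sdk';

// Create the manager; webServiceUrls sets where the local REST server listens.
const manager = FoundryLocalManager.create({
  appName: 'my-app',
  logLevel: 'info',
  webServiceUrls: 'http://localhost:5764'
});

// Look up a model by alias; the SDK picks the variant that fits your hardware.
const model = await manager.catalog.getModel('qwen2.5-0.5b');

// First run downloads the model (can take minutes); later runs use the cache.
await model.download();
await model.load();

// Expose the OpenAI-compatible endpoint at http://localhost:5764/v1.
manager.startWebService();
```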

After this you just use the OpenAI SDK with baseURL pointing to localhost. The API key is literally 'notneeded'. I like that they kept that transparent instead of making you generate some fake token.
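With the openai package, that means constructing the client as `new OpenAI({ baseURL: 'http://localhost:5764/v1', apiKey: 'notneeded' })` and calling `chat.completions.create()` as usual. Because the endpoint is OpenAI-compatible, you can also make the same request with plain `fetch`, which shows what actually goes over the wire. Below is a minimal sketch that assumes the port from the config above. `buildChatRequest` and `chatOnce` are hypothetical helpers. `modelId` is also an assumption: use whatever identifier the local server reports for the loaded model.

```javascript
// Hypothetical helpers that build the same request the OpenAI SDK would send.
const LOCAL_BASE_URL = 'http://localhost:5764/v1';

function buildChatRequest(baseURL, modelId, messages, stream = false) {
  return {
    url: `${baseURL}/chat/completions`,
    init: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        // Placeholder key, matching the 'notneeded' value used with the SDK.
        Authorization: 'Bearer notneeded',
      },
      body: JSON.stringify({ model: modelId, messages, stream }),
    },
  };
}

// Sends one non-streaming request and returns the assistant's reply text.
async function chatOnce(baseURL, modelId, prompt) {
  const { url, init } = buildChatRequest(baseURL, modelId, [
    { role: 'user', content: prompt },
  ]);
  const res = await fetch(url, init);
  if (!res.ok) throw new Error(`HTTP ${res.status}: ${await res.text()}`);
  const data = await res.json();
  return data.choices[0].message.content;
}
```

For example, `await chatOnce(LOCAL_BASE_URL, modelId, 'Summarize this paragraph')` returns the reply text. Keeping the request builder separate means you can test the wire format without a running server.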

Where this gets interesting and where it breaks

The real value here is for apps that need to work offline or in environments where you cannot send data to the cloud. Think healthcare, legal document processing, government. I had a client last year who needed everything on-premise, no exceptions. We ended up building a whole custom ONNX Runtime wrapper. If Foundry Local had existed back then, we would easily have saved three weeks.

But here is the thing nobody talks about: the model selection is limited. Right now you're working with smaller models like Qwen 2.5 0.5B, and that is a tiny model. It's good for simple tasks, summarization, and basic Q&A. But if you're expecting GPT-4-level reasoning running on your laptop, that is not happening. The alias system is smart, though: it picks the best variant for your hardware automatically. If you have a decent GPU, it uses that; if not, it falls back to CPU. I tested this on a Surface Pro and it worked, just slowly. On my workstation with a 4070, it was much better.

One thing that bothered me: the cleanup step. You have to manually call model.unload() and manager.stopWebService(). If your app crashes before that, you've got orphaned processes. In a production web app, that is a problem. You need to handle it with proper signal handlers, process exit hooks, all of that. The docs don't mention this at all.
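Here is a minimal sketch of those hooks in Node.js. `installShutdownHooks` is a hypothetical helper, not an SDK API. It runs your cleanup at most once on SIGINT, SIGTERM, an uncaught exception, or an unhandled rejection, then exits. No handler runs on SIGKILL or a hard crash of the process, so this narrows the orphaned-process window rather than closing it.

```javascript
// Hypothetical helper (not part of foundry-local-sdk): run cleanup once on shutdown.
// `proc` defaults to the real process; passing a stub makes the logic testable.
function installShutdownHooks(cleanup, proc = process) {
  let pending = null;

  // Memoize the cleanup promise so repeated signals don't unload twice.
  const runOnce = () => {
    if (!pending) {
      pending = Promise.resolve()
        .then(cleanup)
        .catch((err) => console.error('cleanup failed:', err));
    }
    return pending;
  };

  // Ctrl+C and orchestrator stops: clean up, then exit with 128 + signal number.
  const exitCodes = { SIGINT: 130, SIGTERM: 143 };
  for (const [signal, code] of Object.entries(exitCodes)) {
    proc.once(signal, async () => {
      await runOnce();
      proc.exit(code);
    });
  }

  // Crashes from uncaught errors or rejected promises: log, clean up, exit non-zero.
  for (const event of ['uncaughtException', 'unhandledRejection']) {
    proc.once(event, async (err) => {
      console.error(err);
      await runOnce();
      proc.exit(1);
    });
  }

  return runOnce;
}
```

In the app, pass a cleanup function that calls `model.unload()` and then `manager.stopWebService()`. Also call the returned function on your normal shutdown path, so every exit goes through the same code. The `proc` parameter exists only so the logic can be exercised with a stub instead of real signals.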

Also, this is still in preview. I mean, the docs literally say "features, approaches, and processes can change." So building a production app on it today is a risk. For prototyping and internal tools? Go ahead. For shipping to customers? I would wait.

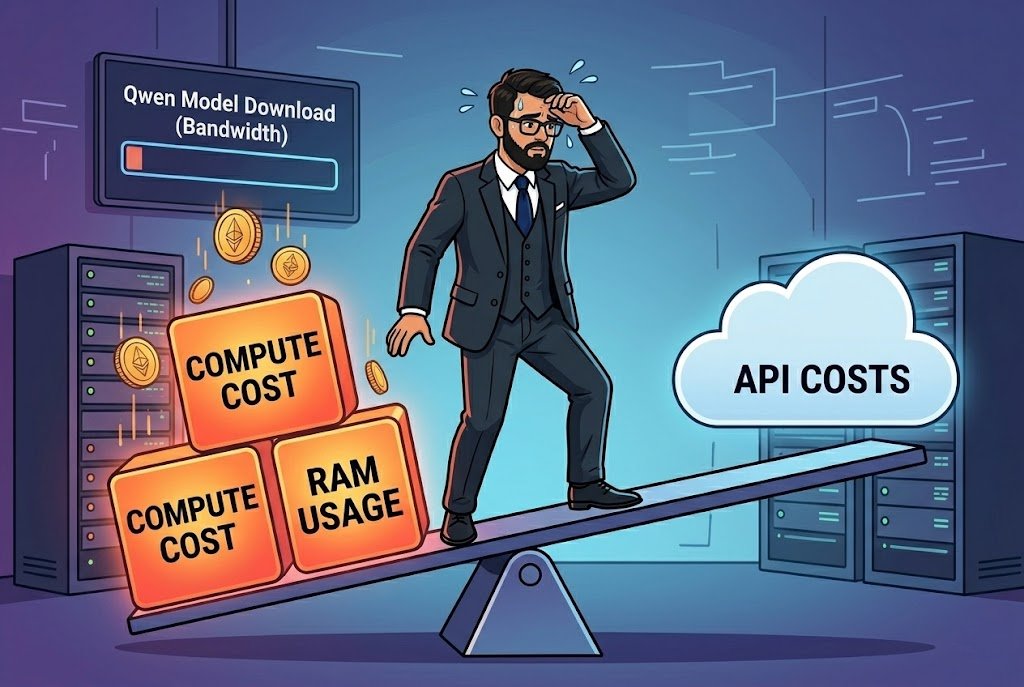

The cost nobody warns you about

People hear "local" and think "free." It is free in terms of API costs, yes. But the compute cost shifts to the user's machine. That Qwen model needs to be downloaded, and that's bandwidth. It needs RAM to run. It needs CPU or GPU cycles. For a web app where you're running this on a server, your infrastructure cost goes up. For desktop or Electron apps where it runs on the user's machine, you now need minimum hardware requirements. Either way, somebody is paying.
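For the desktop case, a startup check against your own minimums turns a slow, confusing failure into a clear message. This sketch uses Node's built-in `os.totalmem()` and `os.cpus()`. The thresholds in `MIN_SPECS` are made-up placeholders, and `checkHardware` is a hypothetical helper. It doesn't detect GPUs, since `node:os` doesn't expose them.

```javascript
const os = require('node:os');

// Hypothetical minimums for illustration only; measure your own model and workload.
const MIN_SPECS = { ramGiB: 8, cpuCores: 4 };

// Reads what this machine has; os.totalmem() returns bytes.
function currentSpecs() {
  return { ramGiB: os.totalmem() / 1024 ** 3, cpuCores: os.cpus().length };
}

// Compares actual specs against the minimums and lists every shortfall.
function checkHardware(specs, min = MIN_SPECS) {
  const problems = [];
  if (specs.ramGiB < min.ramGiB) {
    problems.push(`needs ${min.ramGiB} GiB RAM, found ${specs.ramGiB.toFixed(1)} GiB`);
  }
  if (specs.cpuCores < min.cpuCores) {
    problems.push(`needs ${min.cpuCores} CPU cores, found ${specs.cpuCores}`);
  }
  return { ok: problems.length === 0, problems };
}

// Warn at startup instead of failing halfway through a model load.
const result = checkHardware(currentSpecs());
if (!result.ok) console.warn('Below recommended hardware:', result.problems.join('; '));
```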

The OpenAI compatibility is double-edged too. Yes, it means you can swap between local and cloud easily: same code, different baseURL. That is genuinely useful for hybrid architectures where you do simple stuff locally and send complex queries to the cloud. But it also means you inherit the OpenAI SDK's quirks and limitations. Streaming works fine; I also tested it with the fetch API approach, and that was clean enough.
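Here is roughly what the fetch approach to streaming looks like. OpenAI-compatible servers stream chat completions as server-sent events. Each `data:` line carries a JSON chunk whose text is in `choices[0].delta.content`, and the stream ends with `data: [DONE]`. This sketch assumes Foundry Local follows that format and that the request body sets `"stream": true`. `createSseParser` and `streamChat` are hypothetical helpers. The parser buffers partial events because a network chunk can end in the middle of one.

```javascript
// Hypothetical parser for OpenAI-style server-sent events.
function createSseParser(onDelta) {
  let buffer = '';
  let done = false;
  return {
    // Feed raw text; returns true once the "[DONE]" sentinel has been seen.
    push(text) {
      buffer = (buffer + text).replace(/\r\n/g, '\n');
      // Events end with a blank line; keep any trailing partial event for later.
      const events = buffer.split('\n\n');
      buffer = events.pop();
      for (const event of events) {
        for (const line of event.split('\n')) {
          if (!line.startsWith('data:')) continue;
          const payload = line.slice('data:'.length).trim();
          if (payload === '[DONE]') {
            done = true;
            continue;
          }
          const delta = JSON.parse(payload).choices?.[0]?.delta?.content;
          if (delta) onDelta(delta);
        }
      }
      return done;
    },
  };
}

// Streams a chat completion, calling onDelta with each text fragment.
async function streamChat(url, init, onDelta) {
  const res = await fetch(url, init);
  if (!res.ok || !res.body) throw new Error(`HTTP ${res.status}`);
  const decoder = new TextDecoder();
  const parser = createSseParser(onDelta);
  // In Node.js, a fetch response body is an async-iterable ReadableStream.
  for await (const chunk of res.body) {
    if (parser.push(decoder.decode(chunk, { stream: true }))) break;
  }
}
```

Keeping the parser separate from `fetch` means you can test it by feeding it strings, including chunks split mid-event.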

What I would actually recommend: use Foundry Local for the development loop. Run models locally while building, test your prompts, and iterate fast without burning API credits. Then deploy with cloud models for production. The code barely changes. That alone makes this worth learning, even if you never ship the local part to users.
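Here is a sketch of that switch, assuming the app reads its target from environment variables. `APP_ENV`, `LOCAL_MODEL`, and `CLOUD_MODEL` are hypothetical names, and the cloud URL is OpenAI's public API. The default local model identifier is also an assumption; use whatever ID the local server reports.

```javascript
// Hypothetical config switch: same client code, different baseURL per environment.
function clientConfig(env = process.env) {
  if (env.APP_ENV === 'production') {
    if (!env.OPENAI_API_KEY) throw new Error('OPENAI_API_KEY is required in production');
    return {
      baseURL: 'https://api.openai.com/v1',
      apiKey: env.OPENAI_API_KEY,
      model: env.CLOUD_MODEL,
    };
  }
  // Development: the Foundry Local server started by the setup code.
  return {
    baseURL: 'http://localhost:5764/v1',
    apiKey: 'notneeded',
    // Assumption: the local server accepts this model identifier.
    model: env.LOCAL_MODEL ?? 'qwen2.5-0.5b',
  };
}
```

Then `const { baseURL, apiKey, model } = clientConfig();` feeds `new OpenAI({ baseURL, apiKey })`. Every `chat.completions.create({ model, messages })` call stays the same in both environments.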

The JS/TS SDK is at version 0.9.0, which tells you exactly where Microsoft thinks this is: almost ready, but not quite.