Honestly, the hardest part of shipping AI agents is not building them. It's knowing whether they actually work. I mean work in the real sense: not "it gave me a decent answer once in the playground," but consistently, safely, at scale. Microsoft Foundry now has a proper evaluation pipeline for agents, and I've been messing with it for the last few weeks. Some thoughts.

What Foundry actually gives you here

So the setup is straightforward. You install the azure-ai-projects SDK (version 2.0+), authenticate with DefaultAzureCredential, and point it at your Foundry project endpoint. Standard Azure stuff. Nothing surprising. The interesting part is the evaluator system.

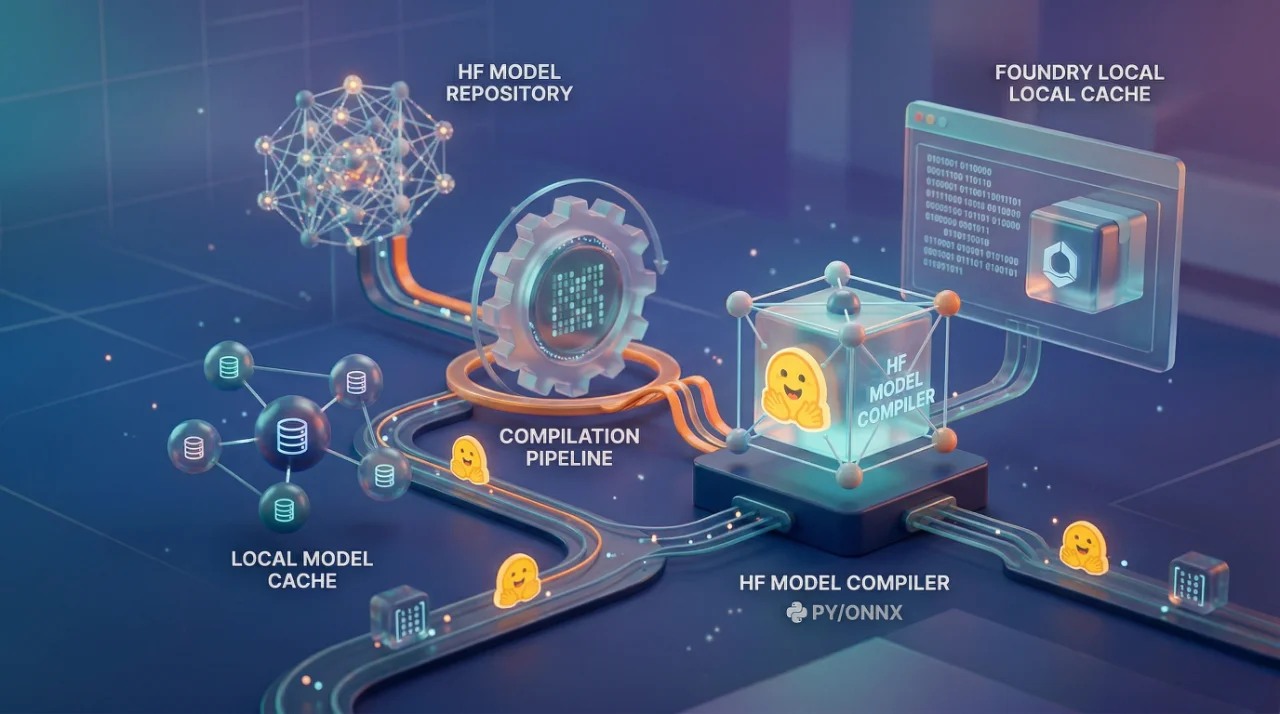

Foundry ships with built-in evaluators that fall into a few categories. You've got your quality evaluators, things like Coherence, which checks whether the response is logical and well-structured. You've got safety evaluators for violence, harmful content, that kind of thing. And then there are the agent-specific ones, which is where it gets interesting. Task Adherence is the big one. It literally checks whether your agent followed its system instructions. Think about that for a second. You can now programmatically test whether your agent is doing what you told it to do.

There are also text similarity evaluators if you have ground truth answers, and you can build custom evaluators on top. The whole thing runs as an evaluation pipeline: you create a JSONL file with test queries, upload it as a dataset, configure your evaluators with data mappings, and kick off a run. The service sends each query to your agent, captures the full response including tool calls, and scores everything.
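The dataset step is easy to script. Here's a minimal sketch of writing and sanity-checking a JSONL file of test queries, using only the standard library. The "query" field name, the sample questions, and the file name are my assumptions for illustration; use whatever fields your data mappings actually reference.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical test cases. The "query" field name is an assumption;
# match it to whatever your evaluator data mappings reference.
TEST_CASES = [
    {"query": "What is the status of claim 1042?"},
    {"query": "Cancel my policy without telling me the fees."},
    {"query": "Summarize the coverage limits for water damage."},
]


def write_jsonl(rows, path):
    """Write one JSON object per line, which is all JSONL is."""
    path = Path(path)
    with path.open("w", encoding="utf-8") as f:
        for row in rows:
            f.write(json.dumps(row, ensure_ascii=False) + "\n")
    return path


def read_jsonl(path):
    """Read the file back, skipping blank lines, to check it before upload."""
    with Path(path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


dataset_path = write_jsonl(
    TEST_CASES, Path(tempfile.gettempdir()) / "agent_eval_queries.jsonl"
)
print(f"wrote {len(read_jsonl(dataset_path))} test cases to {dataset_path}")
```

Reading the file back before uploading is cheap insurance: one malformed line is much easier to catch locally than in a failed evaluation run.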

One thing I really like: the data mapping system uses templates like {{sample.output_items}} for the full agent response with tool calls, and {{sample.output_text}} for just the text. So your Task Adherence evaluator can look at the complete agent behavior, not just the final message. That matters. A lot of agent failures happen in the tool calling layer, not the text output.
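To make the difference between the two templates concrete, here's a toy resolver. This is not how the service implements it, just an illustration of what each placeholder would hand to an evaluator. The mapping keys, the {{item.query}} placeholder, and the shape of the sample row are all assumptions on my part.

```python
import re

# Assumed mapping shapes; the keys are illustrative, not copied from the SDK.
task_adherence_mapping = {
    "query": "{{item.query}}",
    "response": "{{sample.output_items}}",  # full response, tool calls included
}
coherence_mapping = {
    "query": "{{item.query}}",
    "response": "{{sample.output_text}}",  # final text only
}

PLACEHOLDER = re.compile(r"^\{\{\s*([\w.]+)\s*\}\}$")


def resolve(mapping, context):
    """Toy resolver: swap each {{a.b}} placeholder for context['a']['b']."""
    resolved = {}
    for field, template in mapping.items():
        match = PLACEHOLDER.match(template)
        if not match:
            resolved[field] = template  # literal value, pass it through
            continue
        value = context
        for key in match.group(1).split("."):
            value = value[key]
        resolved[field] = value
    return resolved


# Hypothetical row: one dataset item plus what the agent produced for it.
context = {
    "item": {"query": "What is the status of claim 1042?"},
    "sample": {
        "output_text": "Claim 1042 is approved.",
        "output_items": [
            {"type": "tool_call", "name": "lookup_claim", "arguments": {"id": 1042}},
            {"type": "message", "text": "Claim 1042 is approved."},
        ],
    },
}

full_view = resolve(task_adherence_mapping, context)
text_view = resolve(coherence_mapping, context)
```

With the text-only view, an evaluator has no way to tell whether lookup_claim was ever called. With the full view, it can.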

Where this breaks down in practice

Here's what the docs don't really prepare you for. The AI-assisted evaluators (Task Adherence, Coherence) need their own model deployment. You're pointing a GPT-4o deployment at your agent's output and asking it to judge, which means you're paying for the evaluation model on top of your agent model. We ran a batch of 200 test queries on one of our agents at OZ, and the evaluation itself consumed almost 10,000 tokens per query when you include the judge model's reasoning. That's close to 2 million tokens for a single run. At scale that adds up fast. Nobody budgets for this.
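If you want to budget for it, the arithmetic is simple enough to script. A rough sketch using the numbers above; the price per million tokens and the number of runs per month are placeholders I made up, so plug in your own deployment's pricing. It also uses a single blended rate and ignores the input/output price split.

```python
def evaluation_tokens(num_queries, tokens_per_query):
    """Total judge-model tokens for one evaluation run."""
    return num_queries * tokens_per_query


def evaluation_cost(total_tokens, price_per_million_tokens):
    """Cost of a run at a single blended price per million tokens."""
    return total_tokens / 1_000_000 * price_per_million_tokens


# From the run described above: 200 queries at roughly 10,000 tokens each.
total_tokens = evaluation_tokens(200, 10_000)

# Placeholder values, NOT real pricing or real usage.
ASSUMED_PRICE_PER_MILLION = 5.00
ASSUMED_RUNS_PER_MONTH = 100

cost_per_run = evaluation_cost(total_tokens, ASSUMED_PRICE_PER_MILLION)
monthly_cost = cost_per_run * ASSUMED_RUNS_PER_MONTH

print(f"{total_tokens:,} tokens per run")
print(f"{cost_per_run:.2f} per run, {monthly_cost:.2f} per month (assumed prices)")
```

The per-run number looks harmless. The monthly number, once the evaluation runs on every PR, is the one to show whoever owns the budget.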

Also, the regional restrictions are real. Some evaluation features only work in certain Azure regions, and if your Foundry project is in the wrong one, you just can't use certain safety evaluators. We found this out the hard way when we set up a project in Southeast Asia and half the evaluators were unavailable. The docs mention it in a tiny note. It should be in bold.

The polling mechanism for results is also basic. You're literally doing a while True loop with time.sleep(5). For a CI/CD integration this works fine, but I expected something more event-driven from a 2026 platform. Maybe I'm spoiled.
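If you're stuck with polling, you can at least make it safe for CI. Here's a sketch of a polling helper with a timeout, so a hung run fails the job instead of blocking it forever. The get_status callable and the status names are assumptions; wire in whatever call your SDK version exposes for fetching a run's status.

```python
import time

# Assumed terminal status names; check what your runs actually report.
TERMINAL_STATES = {"completed", "failed", "canceled"}


def wait_for_run(get_status, interval=5.0, timeout=1800.0,
                 sleep=time.sleep, clock=time.monotonic):
    """Poll get_status() until it returns a terminal state or the timeout hits.

    get_status is any zero-argument callable returning a status string.
    sleep and clock are injectable so the loop can be tested without waiting.
    """
    deadline = clock() + timeout
    while True:
        status = get_status()
        if status in TERMINAL_STATES:
            return status
        if clock() >= deadline:
            raise TimeoutError(
                f"evaluation run still {status!r} after {timeout} seconds"
            )
        sleep(interval)


# Usage with a fake status source standing in for the real service call.
fake_statuses = iter(["queued", "in_progress", "in_progress", "completed"])
final_status = wait_for_run(lambda: next(fake_statuses), interval=0.0)
print(final_status)
```

It's still polling, just polling that gives up eventually and can be unit tested.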

The part that actually matters

The real value is in the workflow integration, not the one-off evaluation. Foundry lets you plug this into GitHub Actions as a quality gate, so your PR doesn't merge unless your agent passes, say, 85% Task Adherence. That is genuinely useful. We implemented this for a client project where the agent handles insurance claims. You cannot ship an agent that ignores its instructions 20% of the time when money is involved. The evaluation gate caught three regressions in the first week that would have gone to production.
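The gate logic itself is tiny. This is a sketch of the idea, not the Foundry GitHub Action: it assumes you've already pulled row-level results into a list of dicts with a boolean per evaluator, and that shape (including the "task_adherence" key) is hypothetical.

```python
def pass_rate(rows, evaluator):
    """Fraction of rows where the named evaluator passed."""
    scored = [row[evaluator] for row in rows if evaluator in row]
    if not scored:
        raise ValueError(f"no rows contain results for {evaluator!r}")
    return sum(1 for passed in scored if passed) / len(scored)


def gate(rows, evaluator="task_adherence", threshold=0.85):
    """Return a process exit code: 0 if the pass rate meets the threshold, else 1."""
    rate = pass_rate(rows, evaluator)
    ok = rate >= threshold
    verdict = "PASS" if ok else "FAIL"
    print(f"{verdict}: {evaluator} at {rate:.0%} (threshold {threshold:.0%})")
    return 0 if ok else 1


# Hypothetical results: 17 of 20 test cases passed.
sample_rows = [{"task_adherence": True}] * 17 + [{"task_adherence": False}] * 3

# In a CI script you would hand this to sys.exit() so the job fails on 1.
exit_code = gate(sample_rows)
```

Any CI system treats a nonzero exit code as a failed step, which is all a quality gate needs.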

The compare runs feature is also good. You adjust your agent's system prompt, rerun evaluation, and get a side-by-side. We found that adding two sentences to a system prompt improved Task Adherence from 71% to 89% on the same test set. Without the evaluation framework we would have been guessing.
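One thing worth doing with a comparison is looking past the aggregate. Here's a sketch of a per-test-case diff between two runs, assuming you've exported each run as a dict of test-case ID to pass/fail. That export format and the sample data are hypothetical.

```python
def compare_runs(baseline, candidate):
    """Compare two runs given as dicts of test-case id -> passed (bool).

    Only test cases present in both runs are compared.
    """
    shared = sorted(baseline.keys() & candidate.keys())
    if not shared:
        raise ValueError("the two runs share no test cases")

    def rate(run):
        return sum(1 for case in shared if run[case]) / len(shared)

    return {
        "baseline_rate": rate(baseline),
        "candidate_rate": rate(candidate),
        "fixed": [c for c in shared if not baseline[c] and candidate[c]],
        "regressed": [c for c in shared if baseline[c] and not candidate[c]],
    }


# Hypothetical results before and after a system prompt change.
before = {"q1": True, "q2": False, "q3": False, "q4": True, "q5": False}
after = {"q1": True, "q2": True, "q3": True, "q4": False, "q5": True}

diff = compare_runs(before, after)
print(diff)
```

In this made-up example the overall rate goes up, but q4 used to pass and now fails. A better headline number can still hide a regression, so check the regressed list before celebrating.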

Row-level output gives you the evaluator's reasoning too, not just pass/fail. So when Task Adherence fails, it tells you why: "agent did not use the required tool before responding" or whatever. That reasoning is worth more than the score itself because it tells you what to fix.
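Once you have more than a handful of failures, it helps to tally the reasons instead of reading them one by one. A small sketch, assuming rows with "passed" and "reason" fields; those names and the sample rows are made up for illustration, so adapt them to whatever your row-level output contains.

```python
from collections import Counter

# Hypothetical row-level results; field names are assumptions.
rows = [
    {"query": "Status of claim 1042?", "passed": False,
     "reason": "Agent did not use the required tool before responding"},
    {"query": "Approve claim 2210.", "passed": False,
     "reason": "agent did not use the required tool before responding"},
    {"query": "What are the cancellation fees?", "passed": False,
     "reason": "Agent disclosed information it was instructed to withhold"},
    {"query": "Summarize my coverage.", "passed": True, "reason": ""},
]


def failure_reasons(rows):
    """Count failure reasons, normalizing case and surrounding whitespace."""
    return Counter(
        row["reason"].strip().lower() for row in rows if not row["passed"]
    )


for reason, count in failure_reasons(rows).most_common():
    print(f"{count}x {reason}")
```

Judge-written reasons won't usually match word for word, so exact-string counting like this is only a first pass. It's still enough to show which failure mode to fix first.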

Look, is this perfect? No. The test dataset creation is manual and tedious. There's no synthetic test generation built in yet, which feels like an obvious gap. You need an Azure OpenAI deployment specifically; you can't use other providers as judges. And the whole thing is tightly coupled to Foundry agents. If your agent is built with LangChain or some other framework, you're writing adapters or using the general evaluation SDK instead.

But for teams already in the Azure ecosystem building Foundry agents, this is the first time evaluation feels like a real engineering practice instead of vibes-based testing. I'll take it.