Most LangGraph tutorials show you a single graph. One neat, clean, linear flow: input goes in, nodes do stuff, output comes out. And that works fine until you have a task that needs to do five things at once and then combine the results. That's where the orchestrator-worker pattern comes in, and honestly, I think most people overcomplicate the explanation.

The idea is dead simple. You have one node — the orchestrator — that looks at a task, breaks it into pieces, and farms those pieces out to worker sub-graphs. Workers do their thing in parallel, send results back, and the orchestrator synthesizes everything into one final output. Delegate, parallelize, synthesize. That's it. That's the whole pattern.

This matters because LLM calls are slow. Say you have a complex task that requires researching three different topics, summarizing a document, and checking facts. Doing that sequentially means you're waiting for each call to finish before the next one starts. With orchestrator-worker, you fire all of them at the same time. We tested this at OZ on a contract analysis pipeline. Sequential processing took about 45 seconds per document. After moving to orchestrator-worker with parallel sub-graphs, we got it down to 14 seconds, roughly a 3x speedup. Same model, same prompts, just better architecture.
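To make the latency argument concrete, here is a minimal sketch in plain asyncio, no LangGraph involved. The `fake_llm_call` function and the sleep durations are made-up stand-ins for real model calls, so the timings only illustrate the shape of the effect, not our production numbers.

```python
import asyncio
import time

# Hypothetical stand-in for an LLM call; the sleep simulates network latency.
async def fake_llm_call(name: str, seconds: float) -> str:
    await asyncio.sleep(seconds)
    return f"{name}: done"

# Illustrative sub-tasks with made-up durations.
SUBTASKS = [("research", 0.15), ("summarize", 0.10), ("fact_check", 0.05)]

async def run_sequential() -> list:
    # Each call waits for the previous one, so latency is the sum of all calls.
    return [await fake_llm_call(name, secs) for name, secs in SUBTASKS]

async def run_parallel() -> list:
    # All calls start together, so latency is roughly the slowest single call.
    return await asyncio.gather(*(fake_llm_call(n, s) for n, s in SUBTASKS))

def timed(coro_fn):
    # Run an async function to completion and report wall-clock seconds.
    start = time.perf_counter()
    results = asyncio.run(coro_fn())
    return results, time.perf_counter() - start

seq_results, seq_seconds = timed(run_sequential)
par_results, par_seconds = timed(run_parallel)
print(f"sequential: {seq_seconds:.2f}s, parallel: {par_seconds:.2f}s")
```

Sequential time tracks the sum of the calls, parallel time tracks the slowest one. That is the whole reason the pattern pays off when the sub-tasks are independent.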

How it actually works in LangGraph

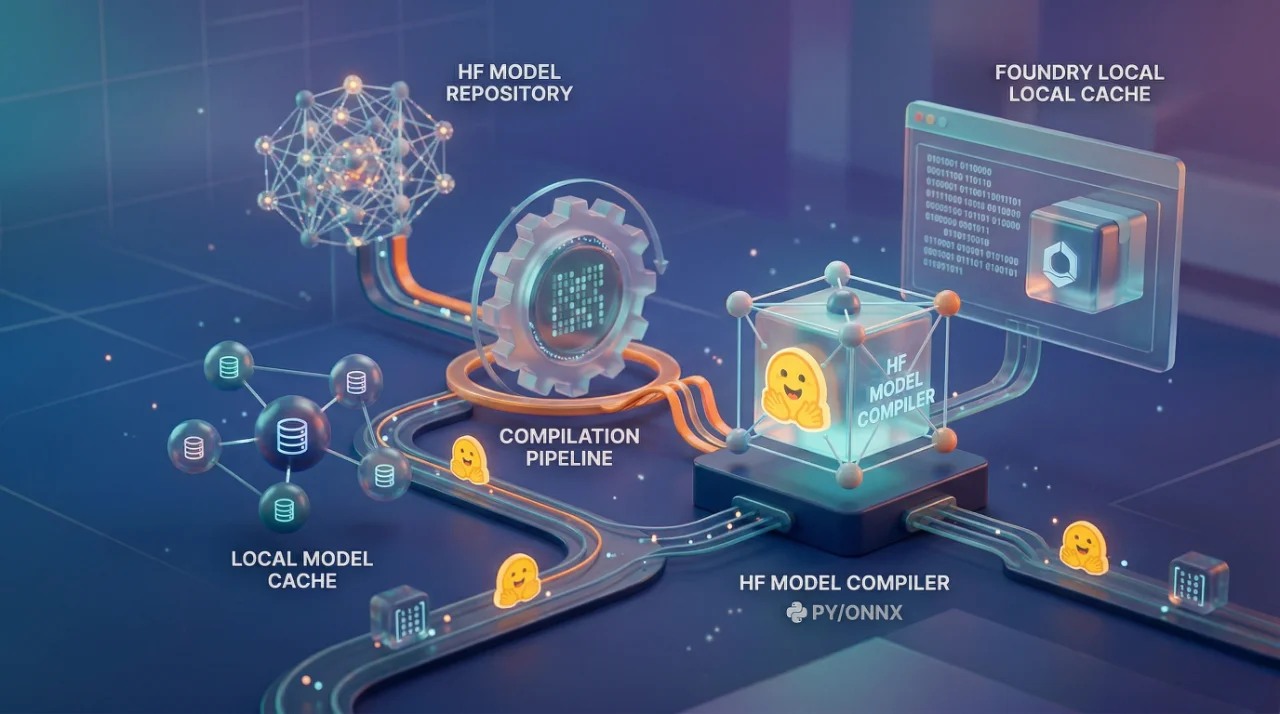

In LangGraph, you build this using sub-graphs. Each worker is its own StateGraph with its own state schema, its own nodes, its own edges. The orchestrator is another StateGraph that sits on top. When a task comes in, the orchestrator node runs a planning step — usually an LLM call that breaks the task into sub-tasks — and then sends each sub-task to a worker graph.

The tricky part is state management. Your orchestrator state needs to track which workers have been dispatched, which have returned, and what their results look like. LangGraph handles this through the Send API: you return a list of Send objects, usually from a conditional edge function placed right after your orchestrator node, each one targeting a worker node with its own payload. Workers run in parallel, and their results get collected back into orchestrator state, typically through a reducer on the state key they all write to.

Something like this in practice: You define a worker graph that takes a sub-task, processes it, returns a result. Then your orchestrator graph has a node that calls the LLM to decompose the task, a fan-out step using Send(), and a final synthesis node that takes all worker outputs and combines them. The Send() API is doing the heavy lifting here — it's what enables the parallel execution without you managing threads or async manually.

One thing that tripped us up early — your worker sub-graphs need to be truly independent. If worker B needs output from worker A, you don't have an orchestrator-worker pattern anymore. You have a pipeline pretending to be parallel. I've seen teams build this wrong and then wonder why performance didn't improve. The workers were waiting on each other through shared state. Defeats the whole purpose.

Where this actually breaks

The pattern is great until your orchestrator's planning step is bad. And I mean, this is the single point of failure nobody talks about enough. If your LLM breaks a task into the wrong sub-tasks, or misses a sub-task entirely, your workers will execute perfectly on the wrong things. Garbage in, garbage out, but now it's parallel garbage, which is somehow worse because you burned more tokens getting there.

I've also seen cost blow up fast. Each worker is making its own LLM calls. If your orchestrator breaks one task into six sub-tasks and each worker makes two LLM calls, that's twelve calls for what used to be maybe three or four in a sequential setup. The latency is better but the cost is higher. You need to be honest about this tradeoff with whoever is paying the bill.
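The arithmetic is worth writing down before you commit. In this back-of-the-envelope sketch, the function name and the defaults of one planning call and one synthesis call are my assumptions; plug in your own counts.

```python
def total_llm_calls(
    subtasks: int,
    calls_per_worker: int,
    planning_calls: int = 1,   # assumption: the orchestrator plans in one call
    synthesis_calls: int = 1,  # assumption: synthesis is also a single call
) -> int:
    # Worker calls scale with the fan-out; planning and synthesis are overhead.
    return planning_calls + subtasks * calls_per_worker + synthesis_calls


worker_calls = 6 * 2             # the twelve worker calls from the example
total = total_llm_calls(6, 2)    # twelve plus planning and synthesis overhead
print(worker_calls, total)
```

Under those assumptions the fan-out itself is twelve calls and the full run is a couple more once you count the orchestrator's own work.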

Error handling is another headache. What happens when one worker fails? Do you retry? Do you return partial results? LangGraph gives you checkpointing and retry mechanisms, but you have to actually build that logic. Default behavior is not very forgiving: an unhandled exception in one worker generally fails the whole run.
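One way to get partial results is to stop letting exceptions escape the worker at all. The sketch below is plain Python, not LangGraph's built-in retry mechanism, and `run_safely`, `WorkerResult`, and `flaky_worker` are hypothetical names. The idea is that a worker node could call something like this so a failure arrives in state as data instead of as a crash.

```python
from typing import Callable, Optional, TypedDict


class WorkerResult(TypedDict):
    subtask: str
    ok: bool
    output: Optional[str]
    error: Optional[str]


def run_safely(
    worker: Callable[[str], str], subtask: str, max_attempts: int = 2
) -> WorkerResult:
    # Retry a few times, then record the failure instead of raising.
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            output = worker(subtask)
            return {"subtask": subtask, "ok": True, "output": output, "error": None}
        except Exception as exc:
            last_error = f"attempt {attempt}: {exc}"
    return {"subtask": subtask, "ok": False, "output": None, "error": last_error}


def flaky_worker(subtask: str) -> str:
    # Hypothetical worker that always fails on one kind of sub-task.
    if "facts" in subtask:
        raise RuntimeError("upstream timeout")
    return subtask.upper()


results = [run_safely(flaky_worker, s) for s in ["summarize", "check facts"]]
succeeded = [r for r in results if r["ok"]]
failed = [r for r in results if not r["ok"]]
print(len(succeeded), "succeeded;", len(failed), "failed")
```

The synthesis step then has to decide what to do with the `failed` entries, whether that means mentioning the gap in the output or refusing to answer. That decision is yours, not the framework's.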

The pattern works best for tasks that are naturally decomposable — multi-document analysis, parallel web research, comparing multiple options, generating different sections of a report simultaneously. It does not work well for tasks where the decomposition itself is ambiguous. If the LLM can't reliably figure out what the sub-tasks should be, you're better off with a simpler sequential chain and some good prompt engineering.

One more thing — testing these systems is painful. You can't just unit test a single node anymore. You need integration tests that verify the orchestrator decomposes correctly, workers execute correctly, and synthesis combines correctly. Three layers of potential failure. We ended up building a small evaluation harness just to test our orchestrator's decomposition quality separately from everything else, and that alone took a week.
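For a sense of what testing decomposition separately can mean, here is a deliberately crude sketch, not the harness we built. It compares the planner's sub-tasks against a hand-written expected list using exact matching after normalization. The function names and sample data are invented, and a real harness would need fuzzier matching than string equality.

```python
def normalize(subtask: str) -> str:
    # Lowercase and collapse whitespace so trivial differences don't count.
    return " ".join(subtask.lower().split())


def score_decomposition(planned: list[str], expected: list[str]) -> dict[str, float]:
    planned_set = {normalize(s) for s in planned}
    expected_set = {normalize(s) for s in expected}
    hits = planned_set & expected_set
    # Precision: how much of the plan was wanted. Recall: how much was covered.
    precision = len(hits) / len(planned_set) if planned_set else 0.0
    recall = len(hits) / len(expected_set) if expected_set else 0.0
    return {"precision": precision, "recall": recall}


# Made-up example: the planner got two of three right and invented one.
planned = ["Summarize contract", "check  facts", "translate to French"]
expected = ["summarize contract", "check facts", "extract parties"]
score = score_decomposition(planned, expected)
print(score)
```

Low recall means the planner is missing sub-tasks, low precision means it is inventing work. Both are worth catching before any worker burns a token.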

People reach for this pattern too early. Start with a simple graph. When you hit a wall where latency is unacceptable and your tasks are clearly parallelizable, then refactor into orchestrator-worker. Not before.