The part nobody explains properly

Most people hear "Azure Machine Learning Pipelines" and think it is just another way to run training scripts. It is not. I mean, technically yes, you can use it to just run a training job. But that is like buying a truck to carry groceries. The whole point is that it breaks your ML workflow into steps (data prep, training, evaluation, deployment), and each step becomes its own manageable component. You build once, you reuse everywhere. The system handles dependencies between steps automatically. That is the actual value.
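To make "handles dependencies automatically" concrete, here is a toy sketch in plain Python. It is not the Azure ML API, just the idea behind it: you declare which step needs which, and the ordering is derived for you. The step names are made up for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical step names. Each key maps to the set of steps it depends on.
steps = {
    "data_prep": set(),
    "training": {"data_prep"},
    "evaluation": {"training"},
    "deployment": {"evaluation"},
}


def execution_order(graph):
    """Return the steps in an order that respects every declared dependency."""
    return list(TopologicalSorter(graph).static_order())


order = execution_order(steps)
print(order)
```

You never write "run training after data prep" anywhere. You only say what training consumes, and the order falls out of that.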

I have been working with Azure ML for a while now, and the thing that clicked for me was when we had a project at OZ where three different people were touching the same pipeline. A data engineer was handling ingestion, I was doing the model training part, and another guy was writing the evaluation logic. Without pipelines, this would have been a nightmare of "don't push yet, I am still editing the script." With pipelines, each person owns their step, builds it as a component, and we stitch it together. Nobody steps on anyone's toes. That alone saved us probably two weeks of back-and-forth on a three-month project.

Now here is what Microsoft docs will tell you: pipelines standardize MLOps practices and support scalable team collaboration. Fine. True. But what they don't emphasize enough is the cost angle. Pipelines can reuse outputs from steps that haven't changed. So if nothing feeding your data prep step has changed since the last run, the pipeline skips the step and reuses the cached output. This is not a small thing when you are paying for compute by the minute on Azure. We had a pipeline where data preprocessing took 45 minutes on a Standard_DS3_v2. After the first run, subsequent runs skipped that step entirely if the input data didn't change. That is real money saved.
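Here is a minimal sketch of the caching idea, again in plain Python and not how Azure ML actually implements it. I am assuming the cache key is a hash of the step's code version plus its inputs. `StepCache`, `fingerprint` and `prep` are hypothetical names, and the 45 minutes is just the number from the story above.

```python
import hashlib
import json


def fingerprint(code_version, inputs):
    """Hash everything that should invalidate a step's cached output."""
    payload = json.dumps({"code": code_version, "inputs": inputs}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class StepCache:
    """Toy cache: rerun a step only when its fingerprint has not been seen."""

    def __init__(self):
        self._outputs = {}
        self.minutes_spent = 0

    def run(self, code_version, inputs, minutes, fn):
        key = fingerprint(code_version, inputs)
        if key in self._outputs:
            # Same code, same inputs: reuse the stored output, pay nothing.
            return self._outputs[key], True
        result = fn(inputs)
        self.minutes_spent += minutes
        self._outputs[key] = result
        return result, False


def prep(inputs):
    # Stand-in for a 45 minute preprocessing job.
    return {"rows": inputs["rows"], "cleaned": True}


cache = StepCache()
_, reused_first = cache.run("v1", {"rows": 1000}, 45, prep)
_, reused_second = cache.run("v1", {"rows": 1000}, 45, prep)  # unchanged input
_, reused_third = cache.run("v1", {"rows": 2000}, 45, prep)   # new input
print(reused_first, reused_second, reused_third, cache.minutes_spent)
```

Three runs, but only two of them are billed. Scale that to a daily retraining schedule and the skipped minutes add up fast.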

Three ways to build, pick one and stick with it

You can build pipelines using the CLI v2, the Python SDK v2, or the Designer UI. My honest recommendation: if you are a developer, go with the Python SDK. The azure-ai-ml package lets you define everything in code, version it, put it in git. The Designer is nice for demos and for people who are not comfortable writing code, but I have never seen a production team use it seriously. The CLI approach with YAML definitions sits somewhere in between. Good for CI/CD integration, but writing YAML for ML workflows gets painful fast. I tried it for one project and went back to the Python SDK within a week.

One thing worth knowing: SDK v1 is deprecated as of March 2025, and support ends in June 2026. If you are still on azureml-sdk, start migrating now. Not tomorrow, now. The v2 SDK is a different package entirely, azure-ai-ml, and the API surface changed a lot. Migration is not a find-and-replace job.

Where people get confused

There are Azure ML Pipelines, Azure Data Factory pipelines, and Azure DevOps Pipelines. Three different things with the same name. Microsoft loves this.

Azure ML Pipelines are for model orchestration: data goes in, model comes out.

Data Factory is for data orchestration: moving and transforming data at scale.

Azure DevOps Pipelines are for CI/CD: code goes in, deployed app comes out.

The overlap between ML Pipelines and Data Factory is where teams waste the most time arguing. My rule is simple: if the output is a trained model, use ML Pipelines. If the output is a transformed dataset that feeds into a warehouse, use Data Factory. Some people try to do everything in one, and it never ends well.

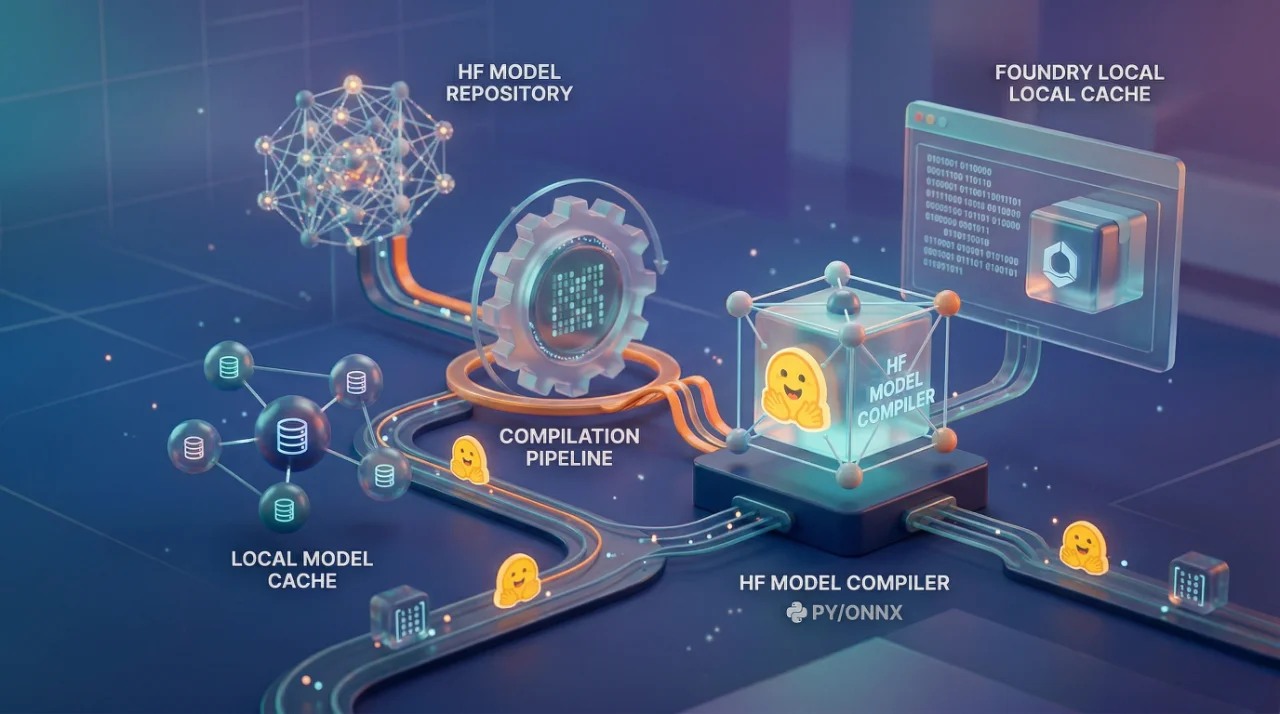

The component-based architecture is genuinely good though. You define a component once (say, a data cleaning step with specific inputs and outputs) and you can drop it into any pipeline. Different teams can maintain a shared library of components. This is where it starts feeling like actual software engineering instead of notebook chaos. And honestly, that is the whole promise of MLOps. Stop treating ML code like it is special. It is software. Version it, test it, deploy it like everything else.
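If the "define once, reuse anywhere" part sounds abstract, this is roughly the shape of it. It is a plain Python sketch, not the azure-ai-ml component API: the `Component` class, the `clean_data` step and both pipelines are hypothetical. The point is the contract. A component declares its inputs and outputs, and any pipeline that respects the contract can use it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Component:
    """A versioned step with a declared input/output contract."""
    name: str
    version: str
    inputs: Tuple[str, ...]
    outputs: Tuple[str, ...]
    run: Callable[[Dict], Dict]

    def __call__(self, **kwargs):
        # Reject calls that do not match the declared inputs.
        if set(kwargs) != set(self.inputs):
            raise ValueError(f"{self.name} expects inputs {self.inputs}")
        result = self.run(kwargs)
        # Reject implementations that drift from the declared outputs.
        if set(result) != set(self.outputs):
            raise ValueError(f"{self.name} must produce outputs {self.outputs}")
        return result


def _drop_missing(args):
    return {"clean_rows": [r for r in args["raw_rows"] if r is not None]}


# Defined once, maintained by whoever owns data cleaning.
clean_data = Component(
    name="clean_data",
    version="1.0.0",
    inputs=("raw_rows",),
    outputs=("clean_rows",),
    run=_drop_missing,
)


# Two unrelated pipelines reuse the same component.
def churn_pipeline(raw_rows):
    cleaned = clean_data(raw_rows=raw_rows)["clean_rows"]
    return {"row_count": len(cleaned)}


def fraud_pipeline(raw_rows):
    cleaned = clean_data(raw_rows=raw_rows)["clean_rows"]
    return {"max_value": max(cleaned)}


print(churn_pipeline([1, None, 3]))
print(fraud_pipeline([10, None, 7]))
```

Because the contract is explicit, a bad call fails at the boundary instead of three steps later, which is exactly the kind of thing you can unit test.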

One last thing: if you are evaluating this against Kubeflow Pipelines, which is the open source alternative, Azure ML Pipelines wins on managed infrastructure and caching but loses on portability. Kubeflow runs anywhere Kubernetes runs. Azure ML locks you into Azure. For most enterprise teams I have worked with, that lock-in is acceptable because they are already on Azure for everything else. But if multi-cloud is your thing, think carefully before committing.